Red Hat Enterprise Linux 9 (RHEL 9) and Docker don’t get along very well sometimes. It turns out, running a container that requires older iptables (and not nftables) can be a problem.

This also affects other operating systems that use nftables, but let’s focus on RHEL 9 today.

Problem with running a newer OS (like RHEL 9)

Wow, RHEL 9 is using a modern kernel and toolchain. Ok, now I can continue.

Some newer Linux Operating Systems are moving away from iptables and changing to nftables. This can be a problem when an app is expecting iptables. Normally the OS can load the older iptables, but you can run into problems when running containers.

Error from GitLab Runner

It’s even more fun trying to figure out what’s going on when running on a GitLab Runner.

This is the error from a GitLab Runner job:

ERROR: error during connect: Get https://docker:2376/v1.40/info: dial tcp: lookup docker on 8.8.8.8:53: server misbehaving

Error when running Docker container

This is the error when running a container using Docker:

# docker run --rm -it --privileged --name dind docker:19.03-dind

...

INFO[2023-03-18T21:03:46.764203869Z] Loading containers: start.

WARN[2023-03-18T21:03:46.774269934Z] Running iptables --wait -t nat -L -n failed with message: `iptables v1.8.4 (legacy): can't initialize iptables table `nat': Table does not exist (do you need to insmod?)

Perhaps iptables or your kernel needs to be upgraded.`, error: exit status 3

INFO[2023-03-18T21:03:46.801647539Z] stopping event stream following graceful shutdown error="<nil>" module=libcontainerd namespace=moby

...

failed to start daemon: Error initializing network controller: error obtaining controller instance: failed to create NAT chain DOCKER: iptables failed: iptables -t nat -N DOCKER: iptables v1.8.4 (legacy): can't initialize iptables table `nat': Table does not exist (do you need to insmod?)

Perhaps iptables or your kernel needs to be upgraded.

(exit status 3)

So here we can see the problem. Our container is trying to use iptables and it’s not working.

But if you run a newer Docker in Docker (DinD) container, it works! And then the old container also starts to work (that is, until the host reboots). The culprit? iptables, and a clever trick in newer DinD containers to work around this issue.

Enable iptables from inside a Container

If you run a container as privileged, you can actually trigger the host OS to load the iptables kernel modules!

This comes from the Docker in Docker (DinD) image, which adds a custom modprobe script (called from the entrypoint script) that essentially just runs this command:

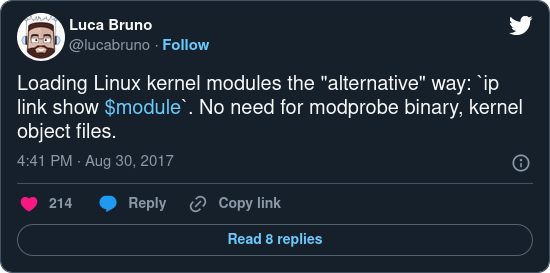

# ip link show ip_tables

The Docker in Docker modprobe script gives credit to:

Enable iptables on Red Hat Enterprise Linux 9

But if your container isn’t using this ‘ip link show ip_tables’ trick, the container will have problems. You need to enable the iptables legacy module on your host OS.
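For an image that lacks the trick, its entrypoint could apply the same idea before starting the Docker daemon. The following is only a sketch: the function name `request_host_module` is hypothetical, and it assumes the container is privileged and has an `ip` binary available.

```shell
#!/bin/sh
# Hypothetical helper (not part of any official image): applies the
# 'ip link show <module>' trick described above for each module name given.
request_host_module() {
    for module in "$@"; do
        # The lookup itself is expected to fail, since no network device has
        # this name. Per the article, in a privileged container it is the
        # attempt that prompts the host to load the module. Errors are ignored.
        ip link show "$module" >/dev/null 2>&1 || true
    done
    return 0
}
```

An entrypoint would call `request_host_module ip_tables` before launching `dockerd`. Because failures are swallowed, the daemon's own startup log remains the place to confirm whether the `nat` table is available.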

To have iptables loaded and ready to go, you can also run the above trick directly on the host. But the “proper” way is to use modprobe when the OS boots.

# modprobe ip_tables

That will dynamically enable the older iptables. But after a reboot the change is gone, so to make a persistent change:

# echo ip_tables > /etc/modules-load.d/ip_tables.conf

Reboot and check:

# lsmod|grep -E "^ip_tables|^iptable_filter|^iptable_nat"

ip_tables 28672 0

Now the older containers that need iptables (legacy) will also work.
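The host-side steps can be wrapped in two small shell helpers, for example in a provisioning script. This is a minimal sketch: the function names and the optional second/first arguments (an alternate directory and an alternate modules list, used here only to make the helpers easy to try without root) are assumptions, not part of RHEL or Docker.

```shell
#!/bin/sh
# Hypothetical helpers; names and optional arguments are illustrative only.

# Write a modules-load.d entry so the module is loaded at every boot.
# $1: module name
# $2: target directory (defaults to /etc/modules-load.d)
persist_module() {
    module="$1"
    conf_dir="${2:-/etc/modules-load.d}"
    printf '%s\n' "$module" > "$conf_dir/$module.conf"
}

# Print the legacy iptables modules that are currently loaded.
# $1: modules list to read (defaults to /proc/modules)
loaded_iptables_modules() {
    modules_file="${1:-/proc/modules}"
    # The first column of /proc/modules is the module name.
    awk '$1 ~ /^(ip_tables|iptable_filter|iptable_nat)$/ { print $1 }' "$modules_file"
}
```

Run as root on the host, `persist_module ip_tables` writes the same file as the `echo` command above, and `loaded_iptables_modules` after a reboot lists the same modules the `lsmod | grep` check looks for.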

Conclusion

Red Hat doesn’t recommend running Docker (they recommend Podman instead), probably for these reasons. I also wonder whether this problem has something to do with the underlying, undocumented way that Docker uses the iptables INPUT chain.